See Ralph Run

Remember Dick and Jane? “See Dick run. See Jane run. Run, Dick, run!” Those simple sentences taught millions of children to read. We’ve borrowed the formula for our orchestration layer, except our protagonist is an AI agent named Ralph, and instead of running through yards he’s running through codebases.

The Nano-Service Philosophy

When the orchestrator outgrew its home inside the Ikigai repository, we faced a choice: build one big monolithic tool, or split it into focused pieces. We went small.

The result is three tiny services, each doing exactly one thing:

- ralph-runs watches for queued goals and spawns agents to complete them

- ralph-logs streams log output to a browser so you can watch the work happen

- ralph-counts displays statistics from completed runs

Each service is a standalone repository. Each fits in a single file (well, ralph-runs is 800 lines of Ruby, but that’s still one file). Each can be started, stopped, and updated independently. No shared databases, no complex deployment pipelines, no coordination protocols. Just files on disk and processes talking to GitHub.

The Hub

Everything revolves around ~/.local/state/ralph/. This directory is the shared nervous system:

    ~/.local/state/ralph/
    ├── clones/              # Checked-out repos for running goals
    │   └── mgreenly/
    │       └── ikigai/
    │           └── 565/     # One clone per goal
    ├── goals/               # Archived goal files (255 and counting)
    ├── logs/                # Orchestrator output
    │   └── ralph-runs.log
    └── stats.jsonl          # Every completed run's metrics

When ralph-runs picks up a goal, it clones the repo into clones/<org>/<repo>/<goal-number>/. The agent works in that isolated directory and makes commits. When it finishes, the orchestrator creates a PR and deletes the clone. If the goal fails, it gets re-queued and the clone is cleaned up so the next attempt starts fresh.
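The clone layout is easy to picture as a small path helper. This is only a sketch: ralph-runs itself is Ruby, and `clone_dir`, its parameters, and the URL parsing here are assumptions made for illustration, not code from the project.

```python
import re
from pathlib import Path

# Hub directory named in the post.
STATE_DIR = Path.home() / ".local" / "state" / "ralph"


def clone_dir(repo_url: str, goal_number: int, state_dir: Path = STATE_DIR) -> Path:
    """Map an SSH-style GitHub URL and a goal number to
    clones/<org>/<repo>/<goal-number>/ under the hub directory.

    Hypothetical helper: only the git@github.com:<org>/<repo>.git form is handled.
    """
    match = re.fullmatch(r"git@github\.com:([^/]+)/(.+?)(?:\.git)?", repo_url)
    if match is None:
        raise ValueError(f"unsupported repo URL: {repo_url}")
    org, repo = match.groups()
    return state_dir / "clones" / org / repo / str(goal_number)
```

With the example from the tree above, `clone_dir("git@github.com:mgreenly/ikigai.git", 565)` resolves to `~/.local/state/ralph/clones/mgreenly/ikigai/565`. Keying the directory on the goal number is what lets parallel agents work on the same repo without stepping on each other.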

The stats file is append-only JSONL. Every finished run adds a line with iterations, tokens, cost, time breakdowns, and lines changed. The ralph-counts dashboard reads this file and renders the numbers.
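For readers who haven't used JSONL, the append-only pattern looks roughly like this. It is a minimal sketch, not the code ralph-runs uses: the function names are hypothetical, and the post does not say what the fields inside each record are called.

```python
import json
from pathlib import Path


def append_run(stats_path: Path, record: dict) -> None:
    """Append one finished run as a single JSON line.

    The file is opened in append mode, so earlier lines are never rewritten.
    """
    stats_path.parent.mkdir(parents=True, exist_ok=True)
    with stats_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def read_runs(stats_path: Path) -> list[dict]:
    """Read every run back in the order it was written, skipping blank lines."""
    with stats_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

One line per run means a writer only ever appends and a reader only ever scans top to bottom, which is why a dashboard can share the file with the orchestrator without any database in between.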

Ralph Runs

The orchestrator is the most substantial piece. You point it at one or more GitHub repos and tell it how many agents to run in parallel:

    ralph-runs --max 3 --model sonnet --reasoning med \
      git@github.com:mgreenly/ikigai.git \
      git@github.com:mgreenly/other-project.git

It polls GitHub Issues for goals labeled goal:queued, picks them up in FIFO order, transitions them to goal:running, clones the repo, writes the goal body to a cache file, and spawns a ralph to do the work.
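The pick-up step can be modeled without touching GitHub at all. The sketch below works on an in-memory list and makes two assumptions the post does not confirm: that FIFO means "oldest creation time first", and that a goal carries exactly one `goal:*` label at a time. The `Goal` class and both function names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Goal:
    number: int
    created_at: str  # ISO 8601 UTC timestamp; same-format strings sort chronologically
    labels: set[str] = field(default_factory=set)
    body: str = ""


def next_queued(goals: list[Goal]) -> Goal | None:
    """Return the oldest goal labeled goal:queued, or None if the queue is empty."""
    queued = [g for g in goals if "goal:queued" in g.labels]
    return min(queued, key=lambda g: g.created_at, default=None)


def start(goal: Goal) -> None:
    """Transition a goal from goal:queued to goal:running."""
    goal.labels.discard("goal:queued")
    goal.labels.add("goal:running")
```

Using labels as the state machine keeps the queue visible in the issue tracker itself: anyone looking at the repo can see what is queued and what is running.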

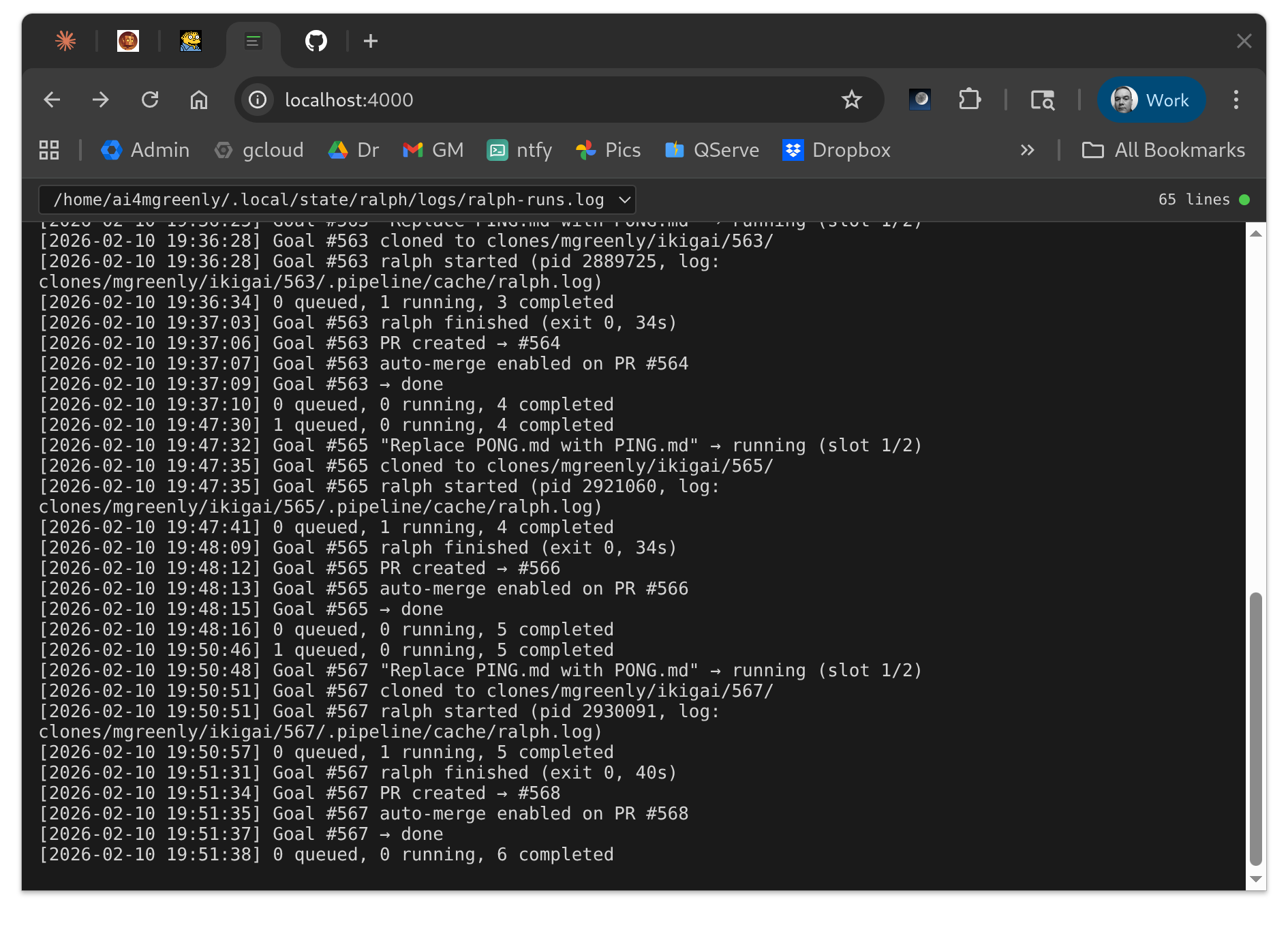

The screenshot shows the orchestrator grinding through goals. Each line is timestamped: goal cloned, ralph started with a PID, ralph finished (exit 0, 34s), PR created, auto-merge enabled, goal marked done. The rhythm is mechanical, which is exactly what we wanted. The orchestrator handles the lifecycle so I don’t have to.

On failure, goals get re-queued automatically (up to 3 retries). If a PR fails CI checks and gets labeled goal:retry, the orchestrator will pick it up, clone the PR branch, augment the goal with the failure context and PR comments, and let ralph try to fix it. Persistent failures transition to goal:stuck and send a notification.
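The retry rule boils down to a single decision after each failure. Here is one way to express it, assuming the orchestrator tracks how many retries a goal has already used; where that counter lives and how it is counted are not described in the post, and `label_after_failure` is a hypothetical name.

```python
MAX_RETRIES = 3  # from the post: "up to 3 retries"


def label_after_failure(retries_so_far: int, max_retries: int = MAX_RETRIES) -> str:
    """Return the label a failed goal should move to.

    While retries remain the goal goes back on the queue; once they are
    used up it is parked as goal:stuck for a human to look at.
    """
    if retries_so_far < max_retries:
        return "goal:queued"
    return "goal:stuck"
```

Under this reading a goal gets one initial attempt plus three retries before it is marked stuck.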

Ralph Logs

Watching agents work is surprisingly useful. Not for supervision (they don’t need hand-holding) but for intuition. You start to recognize patterns: how long exploration takes, when an agent is stuck in a loop, what makes goals succeed or fail.

ralph-logs is a Go program that tails log files and streams them to a browser over WebSocket. You give it glob patterns for what to watch:

    ralph-logs 4000 \
      ~/.local/state/ralph/logs/*.log \
      ~/.local/state/ralph/clones/*/*/.pipeline/cache/ralph.log

The first pattern catches the orchestrator log. The second catches every running ralph’s output. As agents spin up and complete, the file list updates automatically.
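A file list that "updates automatically" can be achieved by re-expanding the glob patterns on a timer and comparing the result with the previous scan. The sketch below shows that idea in Python. ralph-logs itself is written in Go, and whether it rescans like this or uses filesystem notifications is an assumption; `scan` and `diff` are hypothetical names.

```python
import glob
import os


def scan(patterns: list[str]) -> set[str]:
    """Expand every pattern (with ~ support) into the set of files matching right now."""
    found: set[str] = set()
    for pattern in patterns:
        found.update(glob.glob(os.path.expanduser(pattern)))
    return found


def diff(previous: set[str], current: set[str]) -> tuple[set[str], set[str]]:
    """Return (added, removed) between two scans."""
    return current - previous, previous - current
```

A watcher would call `scan` every second or so, start tailing anything in `added`, and drop anything in `removed`. Since clones are deleted when a goal finishes, the removed set is how a finished ralph's log would disappear from the view.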

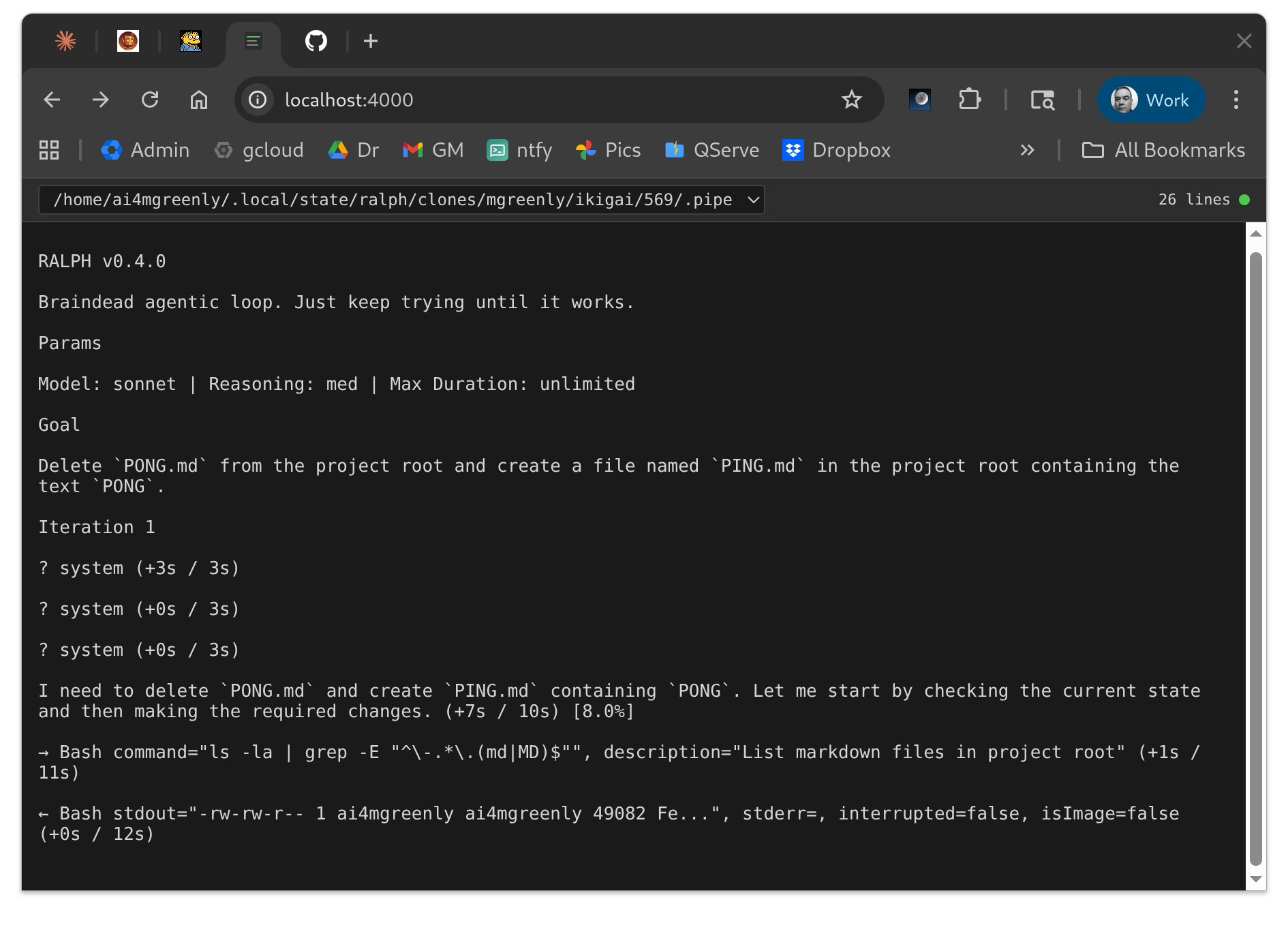

Here’s a ralph working on a trivial goal: delete one file, create another. The log shows the agent’s thinking (“I need to delete PONG.md and create PING.md”), the tool calls (Bash commands), and the results. For complex goals that run for hours, this view lets you check in without interrupting.

Ralph Counts

Numbers matter. When you’re spending real money on API calls and real time waiting for results, you want to know if your investment is paying off.

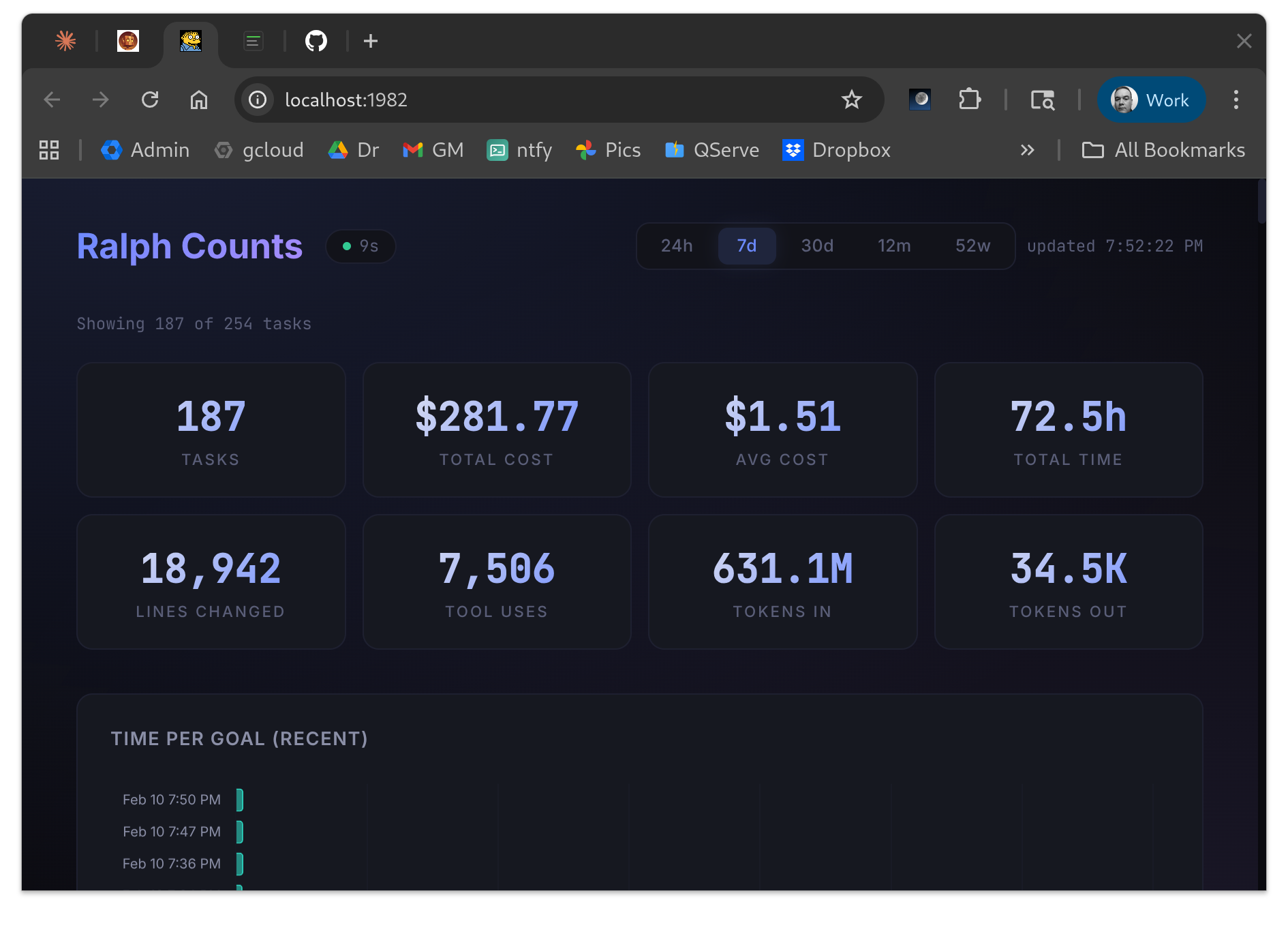

ralph-counts serves a dashboard that reads stats.jsonl and renders the aggregate picture:

Over the last week: 187 goals completed, $281.77 total cost, $1.51 average cost per goal, 72.5 hours of agent time, 18,942 lines changed across 7,506 tool invocations. The time-per-goal chart at the bottom shows recent runs, mostly completing in under a minute.

These numbers drive decisions. When average cost spikes, something changed (maybe goal complexity, maybe model pricing, maybe agent efficiency). When success rate drops, the goals or the tooling need attention. Without measurement, you’re flying blind.
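The headline figures are plain aggregates over the run records. A sketch of the arithmetic, assuming each record carries a cost field (called `cost_usd` here, which is a made-up name; the post does not list the actual keys):

```python
def summarize(runs: list[dict]) -> dict:
    """Compute goal count, total cost, and average cost per goal."""
    total = sum(r["cost_usd"] for r in runs)
    count = len(runs)
    return {
        "goals": count,
        "total_cost": round(total, 2),
        # Guard against an empty stats file to avoid dividing by zero.
        "avg_cost": round(total / count, 2) if count else 0.0,
    }
```

The published numbers are consistent with this calculation: `round(281.77 / 187, 2)` gives `1.51`, the average cost per goal shown on the dashboard.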

Why Bother?

We’ve written about this before: the bottleneck in agentic development isn’t the agent, it’s the human. Every minute I spend on mechanical orchestration is a minute I’m not spending on goals that matter.

The nano-service approach emerged from that constraint. We didn’t set out to build a distributed system. We set out to automate the repetitive parts of running agents, and small focused tools were the fastest way to get there.

Each service took 15-30 minutes to build. ralph-logs is 300 lines of Go. ralph-counts is 50 lines of Python serving a static dashboard. ralph-runs is larger but still comprehensible in one sitting. When something breaks, there’s not much code to debug. When something needs to change, there’s not much code to change.

What’s Next

Goals still live in GitHub Issues. That works for now, but it means each project needs its own goal management skills, and there’s no unified view across repos. We’re considering a dedicated goal service that sits alongside the others, letting you create and manage goals independent of any particular repository.

The infrastructure keeps evolving. Every few days something that felt like overhead becomes automation. The direction is always the same: reduce the friction between having an idea and seeing it implemented.

See Ralph run. See Ralph log. See Ralph count. The names are silly, but the pattern is real.

Co-authored by Mike Greenly and Claude Code